Production AI

Across Models and Providers

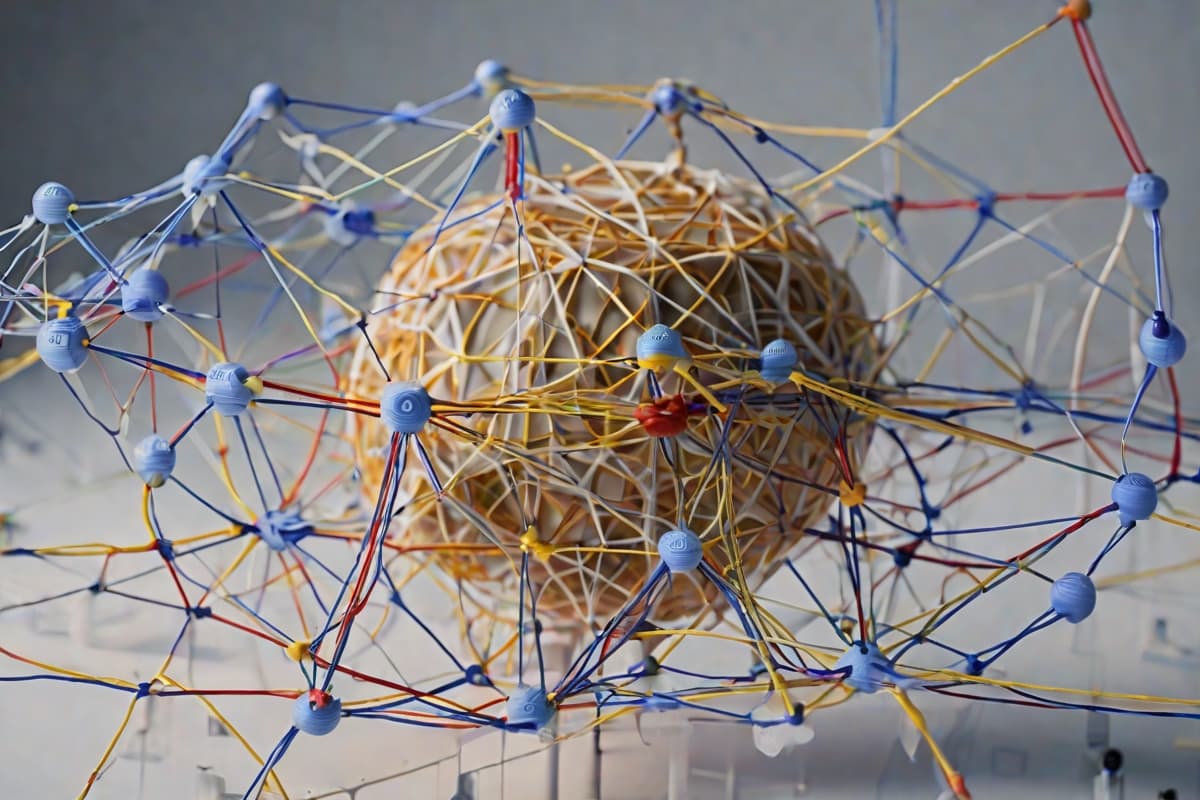

Multi-model routing, cost-aware provider selection, and agentic workflow orchestration — so AI in production is not a one-vendor bet.

AI infrastructure that abstracts the provider — so you are not locked into one

AI that manages AI

The same orchestration platform we run in production — routing tasks across providers by complexity, cost, and compliance boundary.

Architecture proven in Logical Front's own production AI operations — not a reference design.

AI Without Provider Lock-In

The AI provider landscape is moving fast. Models improve, prices drop, new providers emerge. Orchestrating across providers lets you route by capability, cost, or compliance — and swap models without rewriting your applications.

Learn more

Multi-Model Routing

Route requests to the right model based on task complexity, cost constraints, or compliance boundary — abstracting provider-specific APIs.
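As a rough illustration of routing on those three criteria, here is a minimal sketch. The model names, provider labels, prices, complexity scale, and region codes are all hypothetical placeholders, not a description of any real catalog or of the platform's actual routing logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifier
    provider: str              # hypothetical provider label
    max_complexity: int        # highest task complexity (1-5) this model should handle
    cost_per_1k_tokens: float  # illustrative price, not a real quote
    regions: frozenset         # compliance boundaries where the model may be used


@dataclass(frozen=True)
class Task:
    complexity: int         # 1 (trivial) to 5 (hard), an assumed scale
    region: str             # compliance boundary the request must stay inside
    max_cost_per_1k: float  # cost ceiling for this request


# Assumed catalog for illustration only.
CATALOG = [
    ModelOption("small-fast", "provider-a", 2, 0.10, frozenset({"us", "eu"})),
    ModelOption("mid-general", "provider-b", 4, 0.50, frozenset({"us"})),
    ModelOption("large-reasoning", "provider-c", 5, 2.00, frozenset({"us", "eu"})),
]


def route(task, catalog=CATALOG):
    """Return the cheapest model that meets all three constraints."""
    eligible = [
        m for m in catalog
        if task.region in m.regions
        and m.max_complexity >= task.complexity
        and m.cost_per_1k_tokens <= task.max_cost_per_1k
    ]
    if not eligible:
        raise LookupError("no model satisfies the task constraints")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)
```

Because callers only see `route(task)`, swapping a catalog entry does not require changes in application code, which is the point of abstracting the provider.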

Cost-Aware Operations

Rate limits, spend tracking, and automatic model downgrades when a budget window tightens, so you control spend before the invoice arrives.
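One way to picture spend tracking with automatic downgrades is a rolling budget window that selects a cheaper tier as the remaining budget shrinks. The sketch below assumes hypothetical tier names and thresholds, and it leaves out rate limiting entirely.

```python
import time
from collections import deque


class SpendWindow:
    """Tracks spend over a rolling time window (illustrative only)."""

    def __init__(self, budget, window_seconds, clock=time.monotonic):
        self.budget = budget
        self.window_seconds = window_seconds
        self._clock = clock      # injectable so the window can be tested
        self._events = deque()   # (timestamp, cost) pairs, oldest first

    def record(self, cost):
        self._events.append((self._clock(), cost))

    def spent(self):
        # Drop events that have aged out of the window, then total the rest.
        cutoff = self._clock() - self.window_seconds
        while self._events and self._events[0][0] <= cutoff:
            self._events.popleft()
        return sum(cost for _, cost in self._events)

    def remaining_fraction(self):
        return max(0.0, 1.0 - self.spent() / self.budget)


# Assumed thresholds: use a tier while more than this fraction of budget remains.
TIERS = [(0.5, "large-reasoning"), (0.2, "mid-general"), (0.0, "small-fast")]


def pick_tier(window):
    """Return the best tier the remaining budget allows, or None if exhausted."""
    remaining = window.remaining_fraction()
    for threshold, model in TIERS:
        if remaining > threshold:
            return model
    return None  # budget exhausted: the caller should reject or queue the request
```

A caller would check `pick_tier(window)` before each request and call `window.record(cost)` afterwards, so the downgrade happens before the budget is exceeded, not after.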

Our Expertise

LLMOps from teams running production AI

We operate AI orchestration for our own platform and customer deployments — real production experience, not just reference architectures.

AI Platform Engagements

Orchestration Platform Design

Design multi-model orchestration aligned to your workload, cost, and compliance requirements.

Learn more

Agent Workflow Build

Build production agentic workflows with orchestration, tool use, and human approval.

Learn more

LLMOps Managed Service

Managed operations of your AI orchestration platform — routing, evaluation, and governance.

Learn more